Why adding ethics to tech spells success with AI

Will automation replace or augment the humans in the loop? Can a machine be trusted to make decisions without human oversight? How does artificial intelligence sit alongside the principles of accountability and transparency?

As the drive towards robotic process automation (RPA) and artificial intelligence (AI) gathers pace, these questions come to the fore. This is clear from a new report, “AI momentum, maturity and models for success,” published by SAS, Accenture Applied Intelligence and Intel with Forbes Insights. The report is based on a survey of 305 CIOs, CTOs, CAOs, CDOs and data scientists.

It found that 70% of organisations are conducting ethics training for the users of technology, with a further 19% considering this. As Dr Iain Brown, head of data science practice at SAS, told DataIQ in a discussion of the findings, “they are not just working on the automation itself, but on how to do it in an ethically-sound way.”

He identified two dimensions of AI that need ethical scrutiny: first, how decisions are made using the technology, and second, what impact AI has on employees. This is reflected in the survey’s finding that 63% of respondents have an ethics committee in place that reviews their use of AI, with a further 13% considering one.

That figure seems high - DataIQ is aware of a bare handful of such committees in the UK - but may reflect an evolution from existing governance and compliance practices. As Brown pointed out: “For financial services, they have had internal regulation and validation committees and internal teams. Large UK banks also have ethical committees in place focused on the use of data under GDPR looking at how they are using personal information in their decision-making process. That is because of the key articles on automated decision-making. So organisations are changing, especially those which are further down the path.”

This maturity curve is an important aspect of the AI momentum examined in the report. It found that 32% have fully deployed AI in multiple use cases and 14% have rolled out a single use case, compared with 28% who are still experimenting and 14% who are in a sandbox.

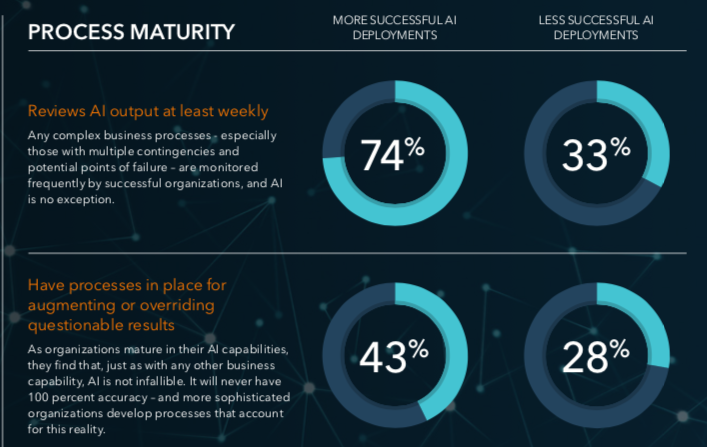

Notably, among organisations that have been more successful in their deployments, 74% review the outputs from AI at least weekly (see Figure 1). By contrast, only 33% of organisations with less successful deployments review outputs that often. This suggests that there is less trust in the accuracy of AI among those with the greatest experience of it. This group routinely augments or overrides its outputs.

This makes the role of ethics committees even more significant as AI and automation take on many routine tasks. “A good example is credit risk and deciding who to make offers to - that area in financial services has been very well controlled and uses simple models so they can be explained to customers who have not been offered a loan. With the evolution of AI and more complex methods of decision-making, it is harder,” said Brown. “Ethics is a profound step to take - it is an evolution in thinking.”

SAS is supporting clients in putting in place an ethical framework that ensures they understand how AI works and how to explain its predictions. It subscribes to a framework of ethics called FATE, derived from software development and supported by major players such as Microsoft. FATE stands for fairness, accountability, transparency and explainability.

Fairness relates to the absence of bias in the way decisions are made. Accountability is a principle found not just in GDPR but also in banking regulations, which require senior executives to take personal responsibility for actions and decisions. Transparency is one of the biggest challenges for AI, because it can so easily become a black-box solution that nobody fully understands. Explainability builds on transparency: it should turn that understanding into a plain-English account of how a decision was reached.

Arriving at this level of ethical consideration may be relatively straightforward in the early stages of implementation, since AI is automating processes and decisions that are already being made. It is notable that 71% of organisations in the survey are leading with deployment of AI into external marketing and social media, while 66% are running it in marketing and sales, and 61% in customer relations, such as chatbots.

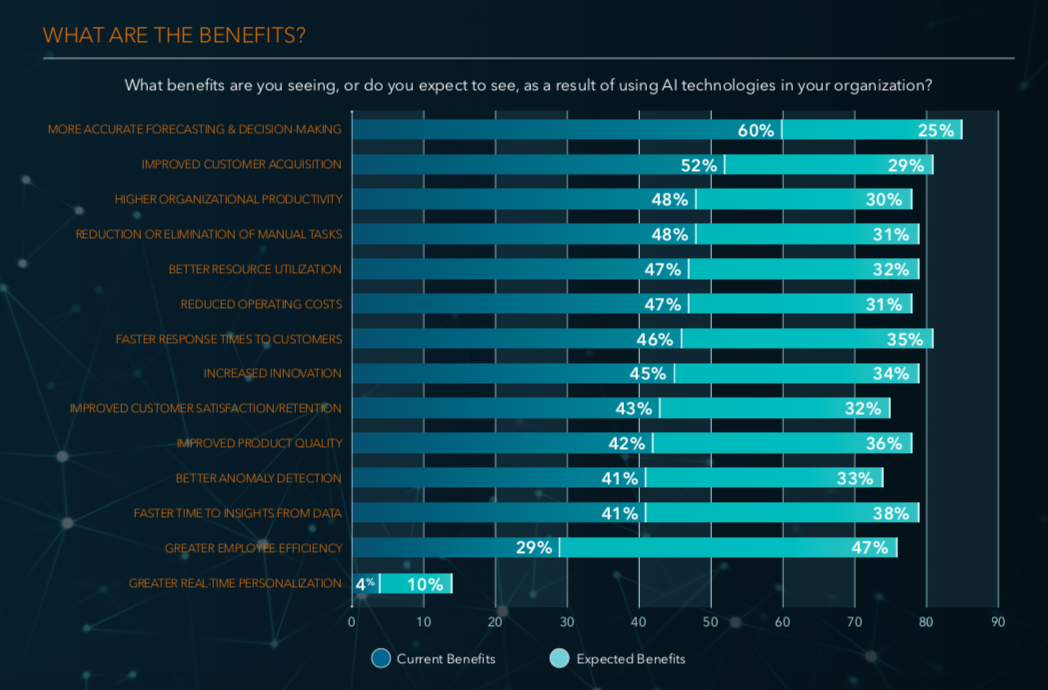

47% of organisations expect benefits from AI in employee efficiency.

Ethics becomes more complex in deployments that directly affect individuals, such as workforce management, which 60% mentioned. As Figure 2 shows, nearly half of organisations expect to see benefits from using AI to drive employee efficiency.

Brown believes that many of the fears expressed about the impact of AI on jobs stem from fear of the unknown. “Automation is not just replacement, but augmentation. The attitude we see among analysts, for example, is that they are happy to give jobs away if they are repetitive, but they also have concerns about job security,” he said.

“Many organisations see advantages in automation for their workforce. Employees want to do exciting things like data science, not mundane tasks. Robotic process automation takes simple tasks that are repetitive and allows analysts to focus on statistical tasks. You don’t want highly-skilled STEM graduates just joining data tables - that is inefficient.”

There will be impacts. For example, JP Morgan's recent announcement that all of its staff need to be able to code could force out people with other skill sets and homogenise the internal culture. While it looks like a smart move now, its consequences are much harder to forecast.

That is why ethics training and ethics committees are a necessary corollary of automation and AI. Thinking through the consequences for decisions, customers and employees has to be about not just what is possible, but also what is right.

Brown believes striking the right balance will create the augmented human-machine working that advocates argue is the true benefit of this technology. He said: “Creativity is a long way off for AI - we are nowhere near strong or general AI, but weak AI is developing at pace. AI is not going to replace us.”

DataIQ is a trading name of IQ Data Group Limited

10 York Road, London, SE1 7ND

Phone: +44 020 3821 5665

Registered in England: 9900834

Copyright © IQ Data Group Limited 2024

David Reed